It is an accepted truth (trust me, I am a professional) that security is often seen as just a technical profession: firewalls, DLP, DMARC, SFTP and TLAs (Three Letter Acronyms) are thrown around with gay abandon. Being resilient is a matter of hardening the OS, having a fully staffed SOC, and running the industry’s latest SIEM services. CISOs should be technical and know all of the TPLAs (Three Plus Letter Acronyms), having spent their formative years in their mother’s basement hacking the Pentagon/GCHQ/Kremlin.

It may surprise you that I dislike this approach and viewpoint.

I found a wonderful quote on (where else?) the internet that, unfortunately, I cannot attribute to anyone. So, if you know where this comes from, please do tell me:

“People aren’t the weak link in security; they are the ONLY link.”

(Unknown)

Information security is primarily a people industry. Technology isn’t a panacea but merely an accelerant and amplifier of the existing processes and solutions. Without the people, there is no information to secure in the first place. If we, as CISOs and business leaders, don’t embrace and support our people, we make our jobs so much more problematic when securing the business and helping it do more, sell more, and create more.

So, in my usual style, here are the three things I suggest everyone who has “people” in their business and is responsible for education in one form or another should bear in mind.

Crowd Sourcing

So many of us (I know I did for the longest time) overlook the rather undeniable fact that having many people means they can all carry a small part of the security load. Crowdsourcing works because many people put a small amount of something in to help someone else build something big. You can make this approach work for you in several different ways.

Firstly, approach certain people to be “super contributors” to your infosec crowdsourced campaign. These are the folks that are your primary eyes and ears on the ground, the folks that people go to when they have an immediate problem. Think of them as the cyber first-aiders, if you will, with a few of them dotted around each floor or department.

Give them some face-to-face training if you can or at the least some detailed role briefing notes. They are doing this role because, like first-aiders, they want to help people and be a part of the solution. Reward them with a token monetary compensation, some swag, recognition or whatever fits into your organisational culture.

Secondly, the rest of the people in the organisation can also be encouraged to play a part; connect their ability to spot phishing and social engineering, and to report incidents and breaches, to their role in the organisation and its successes. Finally, make it fun (see below), make it engaging and make it educational.

Doing that is, of course, an essential subject in and of itself, but the real message here is to embrace what you might see as your biggest weakness as your biggest strength. Making this leap of faith in your mind means your approach to training, problem-solving, and how you address the people in your organisation changes to positive and collaborative rather than cynical and combative.

Story Telling

Storyteller is probably the second oldest profession in the world; we can easily imagine stories being told from one generation to the next around the campfire. Before the written word, it was vital that Grandpa told us, before he died, the secret to successfully hunting that particular breed of rabbit/buffalo/mammoth (depending upon what part of the world you came from).

And yet we can also imagine that hearing the same story over and over again, night after night, while Grandpa gets slowly drunk on his fermented yak’s milk, becomes quite tedious. His tales of derring-do and athletic ardour, as he leapt onto the back of the killer rabbit, became very tiresome after the 954th time. And then last night, as he was getting carried away with the demonstration of his rabbit chokehold, he broke wind. Not only was that the version of the story you passed on to your children, but it was also the birth of the third oldest profession: Comedian (probably).

I am a huge fan of humour in the workplace, especially when it comes to educating people; a good joke conjures up images, feelings, experiences, and smells. But, above all, it is a story. Stories help people create worlds in their minds, relate their experiences to those worlds, and establish a visceral feeling in their bodies, an actual chemical change. Of course, there are few guarantees in this world. Still, one I pass on with a cast-iron guarantee is that no positive, memory-creating chemical changes in any brain anywhere in the world were created by putting people in a room and shouting PowerPoint at them for an hour.

The lesson here is that a good story goes a long way to helping people retain the information; build your message with a strong start, a fantastic middle and a resounding end, and you have the makings of impactful and memorable education.

Don’t Stop

“Oh no, it is that time of year again; we must do our security training”.

Don’t be this company. If you do something once a year because you have to, it becomes an obstacle, something that needs to be completed quickly and with as little effort as possible so you can get on with the fun stuff.

If educational activities in the rest of our lives are continual, then why do we not apply this to our infosec training? Of course, it is not an educational experience that people have opted into, but keeping a cadence of activities that goes beyond a single activity works. Ensuring the format changes and evolves, so it isn’t just posters all year round but lunch and learns, videos, emails, intranet, competitions, and the like, means people who struggle to learn in one format can pick it up in another, and it keeps them on their toes, wondering what the next activity is. It piques their interest and keeps them engaged.

Try creating a 24-month schedule of activities and subjects; it’s not easy, but even having that schedule open and visible allows you to think much more long-term rather than just at a compliance, box-ticking level. Of course, you can still do quizzes (so many auditors and standards require that kind of box-ticking, unfortunately), but by avoiding the one-shot PowerPoint training and ten easy-to-guess questions, you are keeping the content new and fresh. You are also building a reputation as someone who cares about the educational process and the positive outcomes it brings, not just ticks in boxes.

Wrestling Rabbits can be fun AND educational.

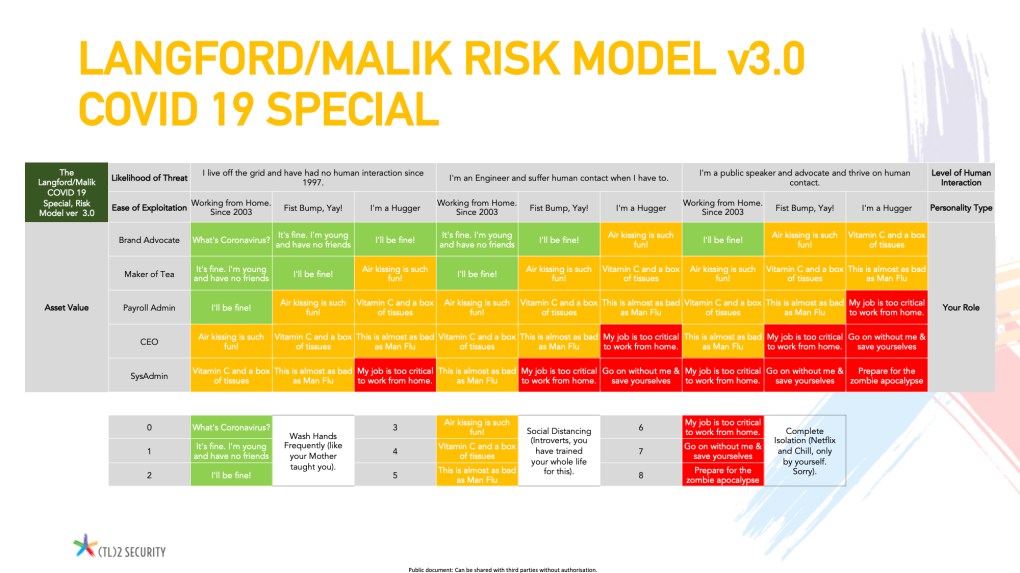

Many, many moons ago, my good friend and learned colleague Javvad Malik and I came up with a way to explain how a risk model works by using an analogy to a pub fight. I have used it in a presentation that has been given several times, and the analogy has really helped people understand risk, and especially risk appetite more clearly (or so they tell me).
