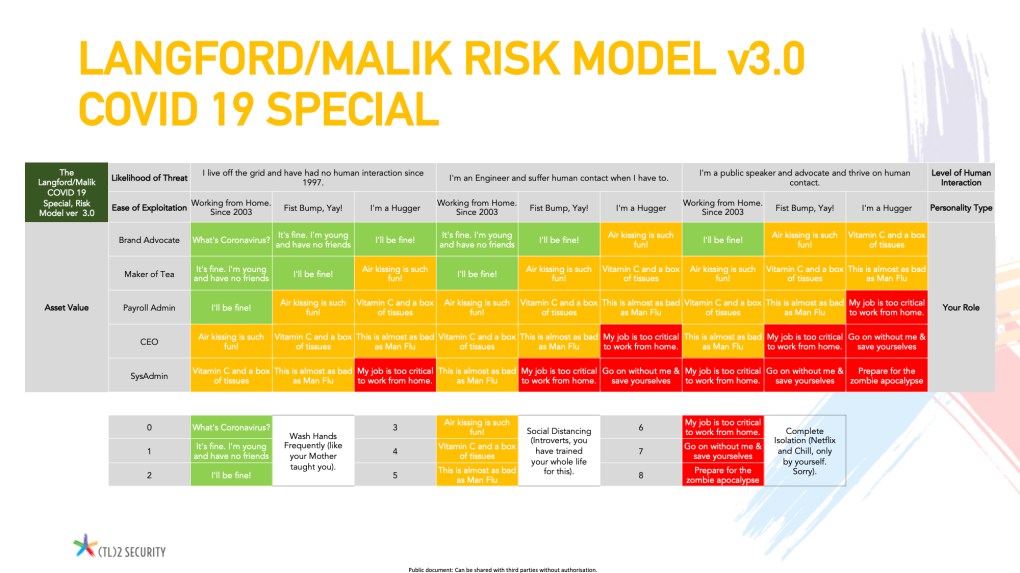

<updated with missing risk matrix image>

Risk is a topic that I like to talk about a lot, mainly because I managed to get it ‘wrong’ for a very long time, and when I finally did realise what I was missing, everything else I struggled with fell into place around it. For me, therefore, Risk is the tiny cog in the big machine that, if it is not understood, greased and maintained, will snarl up everything else.

In the early days of my career, risk was something to be avoided, whatever the cost. Or rather, it needed to be Managed, Avoided, Transferred or Accepted down to the lowest possible levels across the board. Of course, I wasn’t so naive as to think all risks could be reduced to nothing, but they had to be reduced, and “accepting” a risk was what you did once it had been reduced. Imagine my surprise that you could “accept” a risk before you had even treated it!

There are many areas of risk that everyone should know before they start their risk management programme in whatever capacity they are in, but here are my top three:

Accepting the risk

If you want to know how not to accept a risk, look no further than this short music video (which I have no affiliation with, honestly). Just accepting something because it is easy and you get to blame your predecessor or team is no way to deal with risks. Crucially, there is no reason why high-level risks cannot be accepted, as long as whoever does it is qualified to do so, cognizant of the potential fallout, and senior enough to have the authority to do so. Certain activities and technologies are inherently high risk; think of AI, IoT or oil and politics in Russia, but that doesn’t mean you should not be doing those activities.

A company that doesn’t take risks is a company that doesn’t grow, and security risks are not the only ones being managed daily by the company leadership. Financial, geographic, market, people, and legal risks are just some of the things that need to be reviewed.

Your role as the security risk expert in your organisation is to deliver the measurement of the risks as clearly as possible. That includes ensuring everyone understands how the score is derived, the logic behind it and the implications of that score. This brings us neatly to the second “Top Tip”:

Measuring the risk

Much has been written about how risks should be measured: quantitatively or qualitatively, for instance, or financially or reputationally. Should you use a red/amber/green approach to scoring, a percentage, or a figure out of five? What is the best way to present it? In Word, PowerPoint or Excel? (Other popular office software is available.)

The reality is that, surprisingly, it doesn’t matter. What matters is choosing an approach and giving it a go; see if it works for you and your organisation. If it doesn’t, then look at different ways and methods. Throughout it all, however, it is vital that everyone involved in creating, owning and using the approach knows precisely how it works, what the assumptions are, and the implications of decisions being made from the information presented.

Nothing exemplifies this more than the NASA approach to risk. You might expect NASA, with the tough job of putting people into space via some of the most complicated machines in the world, to have a very rigorous, detailed and even complex approach to risk; after all, people’s lives are at stake. And yet their risk matrix comprises a five-by-five grid with probability on one axis and consequence on the other. Each cell in the grid is then scored Low, Medium or High.

Seriously. That’s it. It doesn’t get much simpler than that. However, a 30-page supporting document explains precisely how the scores are derived, how probability and consequence should be measured, how the results can be verified, and so on. The simple measurement itself is not what is important. It is what is behind it that is.
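To show just how little machinery a grid like this needs, here is a minimal Python sketch of a five-by-five matrix. The thresholds (`MEDIUM_FROM`, `HIGH_FROM`) and the idea of multiplying probability by consequence are my own illustrative assumptions, not NASA’s actual cell assignments; those live in the supporting documentation, which is rather the point.

```python
from enum import Enum


class Rating(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


# Hypothetical thresholds applied to probability x consequence (each 1..5).
# Illustrative only: a real scheme defines and justifies its own cut-offs.
MEDIUM_FROM = 6
HIGH_FROM = 15


def rate(probability: int, consequence: int) -> Rating:
    """Return the rating for one cell of a five-by-five grid."""
    for name, value in (("probability", probability), ("consequence", consequence)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    score = probability * consequence
    if score >= HIGH_FROM:
        return Rating.HIGH
    if score >= MEDIUM_FROM:
        return Rating.MEDIUM
    return Rating.LOW


def render_matrix() -> str:
    """Render the grid as text, with the highest probability on the top row."""
    rows = []
    for probability in range(5, 0, -1):
        # Use the first letter of each rating (L, M or H) as the cell label.
        cells = [rate(probability, c).value[0] for c in range(1, 6)]
        rows.append(f"{probability} | " + " ".join(cells))
    # Bottom row: the consequence axis labels.
    rows.append("    " + " ".join(str(c) for c in range(1, 6)))
    return "\n".join(rows)


if __name__ == "__main__":
    print(render_matrix())
```

The code is the easy part. Agreeing what a probability of 3 or a consequence of 4 actually means, and writing that down so everyone scores the same way, is where the real work goes.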

Incidents and risk

Just because you understand risk now doesn’t mean you will be able to predict everything that might happen to you. For example, “Black Swan” events (from Nassim Nicholas Taleb’s book of the same name) cannot be predicted until it is apparent that they will happen.

By this very fact, creating a risk register to predict unpredictable, potentially catastrophic events seems pointless. However, that is not how a good approach to risk works. Your register allows you to update the organisational viewpoint on risk continuously. This provides supporting evidence of your security function’s work in addressing said risks and will enable you to help define a consensual view of the business’s risk appetite.

When a Black Swan event subsequently occurs (and it will), the incident response function will step up and address it as it would any incident. Learning points and advisories will be produced as part of the documented procedures they follow (you have these, right?), including future areas to look out for. This output must be reviewed and included in the risk register as appropriate. The risk register is then reviewed annually (or more frequently as required), and controls are updated, added or removed to reflect the current risk environment and appetite. Finally, the incident response team will review the risk register, safe in the knowledge it contains fresh and relevant data, and ensure their procedures and documentation are updated to reflect the most current risk environment.
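The feedback loop described above could be sketched roughly as follows. This is an illustration under my own assumptions rather than a prescribed tool: the `Risk` and `RiskRegister` classes, their field names, the incident identifiers and the 365-day default review interval are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Risk:
    title: str
    rating: str
    last_reviewed: date
    # Incident references that fed into this entry (hypothetical ID format).
    sources: list[str] = field(default_factory=list)


@dataclass
class RiskRegister:
    # Assumed default: annual review, shortened "as required".
    review_interval: timedelta = timedelta(days=365)
    risks: dict[str, Risk] = field(default_factory=dict)

    def record_learning_point(
        self, title: str, rating: str, incident_id: str, today: date
    ) -> Risk:
        """Add or refresh a risk using output from an incident review."""
        risk = self.risks.get(title)
        if risk is None:
            risk = Risk(title, rating, today)
            self.risks[title] = risk
        else:
            risk.rating = rating
            risk.last_reviewed = today
        risk.sources.append(incident_id)
        return risk

    def due_for_review(self, today: date) -> list[Risk]:
        """Return risks whose last review is at least one interval old."""
        return [
            risk
            for risk in self.risks.values()
            if today - risk.last_reviewed >= self.review_interval
        ]


if __name__ == "__main__":
    register = RiskRegister()
    register.record_learning_point(
        "Supplier outage", "High", "INC-001", date(2023, 1, 10)
    )
    for risk in register.due_for_review(date(2024, 1, 10)):
        print(f"Review needed: {risk.title} ({risk.rating})")
```

Whatever form your register takes, the design point is the same: incident output flows into the register, and the register’s review cycle flows back into the incident response procedures.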

Only by having an interconnected and symbiotic relationship between the risk function and the incident response function will you benefit most from understanding and communicating risks to the business.

So there you have it, three things to remember about risk that will help you not only be more effective when dealing with the inevitable incident but also help you communicate business benefits and support the demands of any modern business.

Risk is not a dirty word.


Many, many moons ago, my good friend and learned colleague Javvad Malik and I came up with a way to explain how a risk model works by using an analogy to a pub fight. I have used it in a presentation that has been given several times, and the analogy has really helped people understand risk, and especially risk appetite more clearly (or so they tell me).
